Supporting Students with Better Assessments

Watch the Recording Listen to the Podcast

We know assessment is a tool that helps teachers to inform personalized learning, differentiate instruction, accelerate learning, and ultimately support healthy learning mindsets. But all this happens only when assessments are valid and reliable. Or, more simply, when assessments are good.

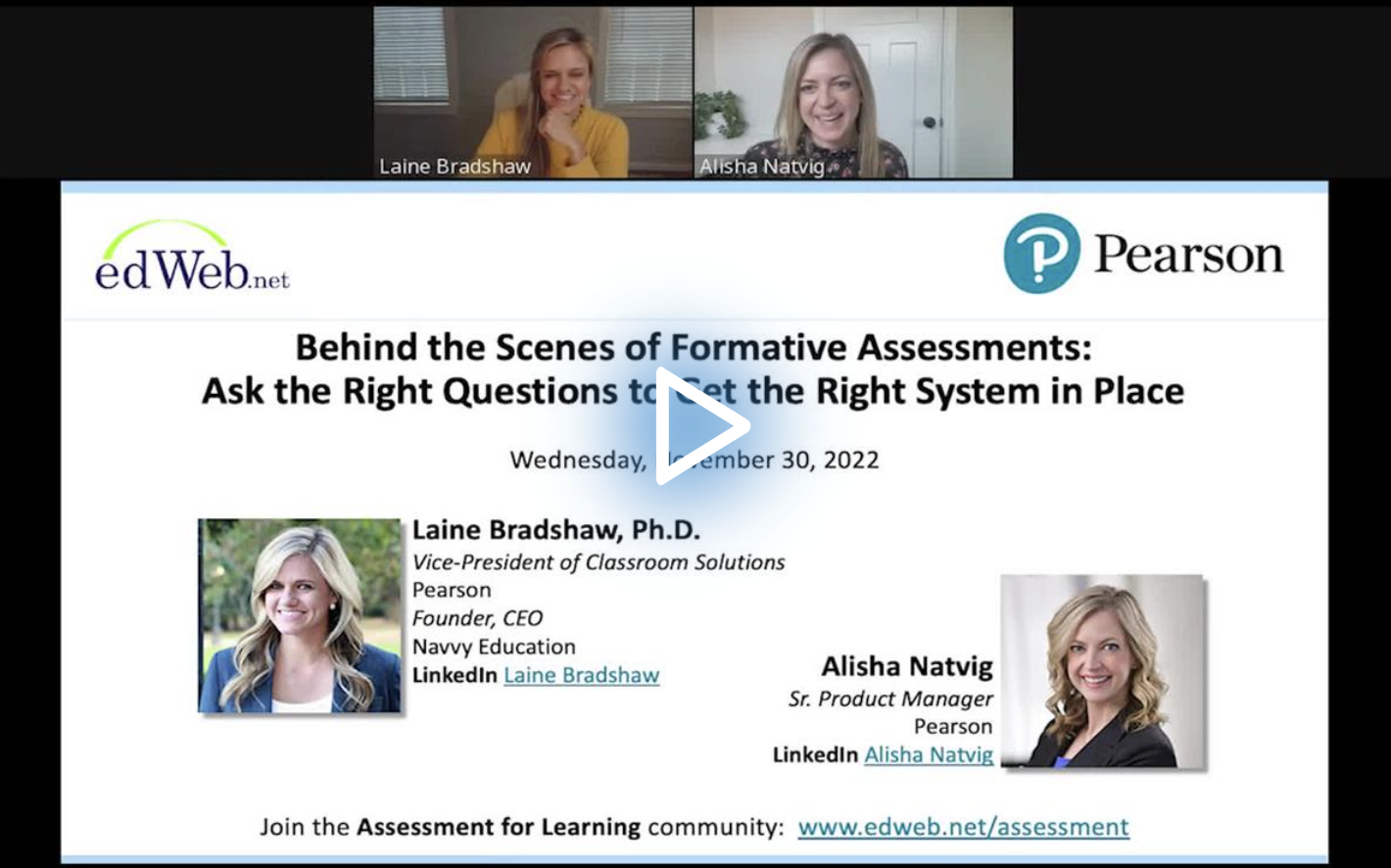

In the edLeader Panel “Behind the Scenes of Formative Assessments: Ask the Right Questions to Get the Right System in Place,” Dr. Laine Bradshaw, Vice President of Classroom Solutions at Pearson and founder of the Navvy assessment system, described ways to evaluate, create, and select data-driven assessment systems that effectively identify students’ learning needs and inform teachers’ instructional decisions.

The Fundamental Assessment Challenge

“Millions of decisions are made across classrooms every day,” explained Bradshaw. “If we could start refining those decisions to be based more accurately and closely aligned to what students need, I think we could truly start to see increases in student learning.”

The challenge, she said, is creating assessments that inform instructional decisions and supports.

Classroom assessments tend to be more granular. They sit in close proximity to learning and inform micro-level, high-frequency instructional decisions. Because they work at the smaller grain size of individual standards, they let teachers tailor useful, relevant lessons that meet specific learning needs.

Summative and interim assessments report domain-level information that drives macro-level curriculum or program decisions; they do not provide standards-level information. As a result, they cannot on their own support personalized or differentiated instruction that addresses learners’ unique academic needs.

What’s missing across assessment types overall, Bradshaw contended, is data that is actionable, accurate, and timely: learning evidence that tells teachers what students know and don’t know so they can provide appropriate instruction.

Assessment Design: What to Think About

The design of assessments is a multi-layered process. Asking questions about the critical data features of each assessment type guides educators in creating or selecting quality assessments that yield essential data.

When thinking about granularity, consider whether the grain size of the learning evidence is small enough to act on. Teachers should ask whether an assessment report gives them enough guidance about what to do next to support students.

While granularity narrows the focus to specific areas, it can also raise issues of validity and reliability. Keep these questions top of mind:

- Is there evidence that supports interpretations of assessment results for a purpose?

- Do the results mean what you think they mean for the purpose you’re about to use them for?

- How reliable is the result (and its corresponding recommendation) for a particular student?

- Is the learning evidence you care about and want to act on reliable enough to guide your resources and time?

- Does the assessment have the proper representation and rigor to measure the correct targets?

Results can “bounce around,” explained Bradshaw, because of the varied ways teachers develop assessments (the types of questions, mix of concepts, mix of cognitive complexity, and number of questions, all of which alter scoring), set cut scores based on a total score (which typically do not yield reliable results), determine the difficulty of questions (which is often confounded with the decision about competency), and design reassessments to check for understanding of a standard.
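A minimal sketch can make the cut-score point concrete. It assumes a deliberately simple model that is not from the panel: every item on a short quiz is answered correctly, independently, with the same fixed probability (a binomial model). The function name `prob_meets_cut` and the quiz numbers are hypothetical.

```python
from math import comb


def prob_meets_cut(n_items: int, cut: int, p_correct: float) -> float:
    """Chance that a student reaches a total-score cut on an n-item quiz.

    Assumption (illustrative only): each item is answered correctly
    independently with the same probability p_correct, so the number
    correct follows a binomial distribution.
    """
    return sum(
        comb(n_items, k) * p_correct**k * (1 - p_correct) ** (n_items - k)
        for k in range(cut, n_items + 1)
    )


# Hypothetical case: a 5-item quiz on one standard, a cut score of 4 correct,
# and a student who answers any given item correctly 70% of the time.
chance = prob_meets_cut(n_items=5, cut=4, p_correct=0.7)
print(f"{chance:.2f}")  # 0.53
```

Under these assumptions, the same student with the same understanding would clear the cut only about half the time, so a “competent” or “not yet” label from a single short quiz could easily flip on a retake.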

The impact on learning is substantial: inadequate assessment might mean that students who need support don’t get it, while students who need less reinforcement receive it anyway.

Interim and summative assessments are less reliable at the level of individual standards, but because they are backed by psychometric analysis, their results are more reliable at the domain and subject level.

“If you can’t trust the results or if they don’t mean what you think they mean or if they’re not consistent,” explained Bradshaw, “then acting on them could misguide instruction instead of guide instruction. So granular evidence must be reliable.”

Another consideration is an assessment’s proximity to learning, which requires 1) that the assessment is ready to be administered, 2) that its results are quickly available to act on, and 3) that it guides teachers in updating how they track what students know.

Psychometrics

Classroom assessment can benefit from psychometrics, which establishes a shared understanding of how to conceive of and evaluate competency. That understanding lets educators move beyond cut scores toward efficient groupings that inform the academic support students require.

Psychometrics, according to Bradshaw, helps teachers and leaders leverage data science to get the data they need to design effective, detailed, reliable, and consistent assessments. For example, aligning psychometrics with diagnostic profiles can shape teachers’ decisions about instruction and learning.
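As a hypothetical illustration of how diagnostic profiles can shape grouping decisions, the sketch below groups students who share the same set of not-yet-mastered standards. The student names, the standard labels, and the `group_by_profile` function are invented for the example, and it assumes per-standard mastery results already exist.

```python
from collections import defaultdict


def group_by_profile(
    profiles: dict[str, dict[str, bool]],
) -> dict[tuple[str, ...], list[str]]:
    """Group students who still need the same set of standards.

    profiles maps each student to {standard: mastered?}. The group key is
    the sorted tuple of standards the student has not yet mastered.
    """
    groups = defaultdict(list)
    for student, mastery in profiles.items():
        needs = tuple(sorted(std for std, mastered in mastery.items() if not mastered))
        groups[needs].append(student)
    return dict(groups)


# Hypothetical diagnostic profiles for three students on two standards.
profiles = {
    "Ava": {"Standard A": True, "Standard B": False},
    "Ben": {"Standard A": False, "Standard B": False},
    "Cruz": {"Standard A": True, "Standard B": False},
}
for needs, students in group_by_profile(profiles).items():
    print(needs, students)
# ('Standard B',) ['Ava', 'Cruz']
# ('Standard A', 'Standard B') ['Ben']
```

Students who have mastered every standard land in a group keyed by an empty tuple, which a teacher might use for extension work.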

The overall benefits of psychometrics? It can help teachers evaluate item quality and the classification of students, and it preserves the meaning of competency when reassessment is needed, whether the same or a different assessment is used for a specific concept.

“We’re making that diagnosis from the model and kind of transitioning that language or conception of assessment,” explained Bradshaw, “transitioning from who knows more than whom to who knows what. We’re taking a content reference approach to determine [whether a student] understands this content.”
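To illustrate the shift from “who knows more than whom” to “who knows what,” here is a minimal sketch of one common way a model can turn item responses into a per-standard mastery diagnosis. It assumes a single-standard, DINA-style model in which each item has a hypothetical “slip” probability (a student who has mastered the standard answers incorrectly) and “guess” probability (a student who hasn’t mastered it answers correctly). This is a textbook-style illustration, not a description of Navvy’s or Pearson’s actual model; the item parameters and the thresholds in `classify` are assumptions.

```python
def mastery_posterior(
    responses: list[int], slip: list[float], guess: list[float], prior: float = 0.5
) -> float:
    """Posterior probability that a student has mastered one standard.

    Assumed model: responses are 1 (correct) or 0 (incorrect) and are
    independent given mastery status.
    """
    if not (len(responses) == len(slip) == len(guess)):
        raise ValueError("responses, slip, and guess must have the same length")
    like_mastered = 1.0
    like_not_mastered = 1.0
    for correct, s, g in zip(responses, slip, guess):
        # Mastered: correct unless the student slips.
        like_mastered *= (1 - s) if correct else s
        # Not mastered: correct only by guessing.
        like_not_mastered *= g if correct else (1 - g)
    numerator = prior * like_mastered
    return numerator / (numerator + (1 - prior) * like_not_mastered)


def classify(posterior: float, mastered_at: float = 0.8, not_mastered_at: float = 0.2) -> str:
    """Turn a posterior into a teacher-facing label (thresholds are assumptions)."""
    if posterior >= mastered_at:
        return "mastered"
    if posterior <= not_mastered_at:
        return "not yet mastered"
    return "uncertain: gather more evidence"


# Hypothetical 4-item quiz: three correct, one incorrect.
p = mastery_posterior([1, 1, 0, 1], slip=[0.1] * 4, guess=[0.2] * 4)
print(round(p, 2), classify(p))  # 0.92 mastered
```

The output is a statement about one standard for one student, not a rank against classmates. Because each item carries its own slip and guess values, different item sets can in principle be scored on the same mastery scale, which is one way a model can keep the meaning of competency stable across reassessments.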

Psychometrics must also be paired with careful design to ensure validity. For example, to get more detailed results standard by standard (or concept by concept), it’s crucial to use a blueprint that maps out the standard, so that assessments and questions measure the correct targets, gather evidence, and work together to yield a valid and reliable result.

Being strategic about what to assess, and how to assess it, is therefore essential. It involves the following steps:

- Identifying what to measure (e.g., a standard) and then operationalizing that target by defining it further (granularity)

- Setting boundaries that determine what to include in the measurement and what to leave out

- Deconstructing the measurement target to focus on specific content and complexity features

- Deciding what proportion of the items should represent each cognitive complexity level and content component, so that the assessment reflects the rigor and content of the original blueprint

- Being mindful of validity threats when moving from the blueprint to the assessment:

  - Underrepresenting parts of the target that should be measured, or measuring something outside what students are expected to understand. The latter happens, for example, when an item uses vocabulary beyond a student’s grade level or is written in a language the student doesn’t understand, which adds irrelevant factors. If you are measuring math but introduce unfamiliar words, you are also measuring reading, which threatens the assessment’s validity.

  - Writing too few Depth of Knowledge (DOK) level 3 questions. Level 1 and 2 questions are often overrepresented, which can lead to greater success on classroom assessments but lower success rates on state assessments.
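The blueprint-to-assessment steps can be sketched in a few lines. This is a hypothetical illustration, not a procedure described in the panel: the percentages, the 12-item length, and the function names `allocate_items` and `shortfalls` are assumptions. It converts blueprint percentages per DOK level into item counts (using the largest-remainder method so the counts add up to the test length) and then flags levels, such as DOK 3, where a drafted assessment falls short of the plan.

```python
def allocate_items(blueprint_pct: dict[str, int], total_items: int) -> dict[str, int]:
    """Convert blueprint percentages into whole-number item counts.

    Uses the largest-remainder method so the counts always add up to
    total_items. Percentages are integers to keep the arithmetic exact.
    """
    if sum(blueprint_pct.values()) != 100:
        raise ValueError("blueprint percentages must sum to 100")
    scaled = {level: pct * total_items for level, pct in blueprint_pct.items()}
    counts = {level: s // 100 for level, s in scaled.items()}
    leftover = total_items - sum(counts.values())
    # Give any remaining items to the levels with the largest remainders.
    by_remainder = sorted(scaled, key=lambda level: scaled[level] % 100, reverse=True)
    for level in by_remainder[:leftover]:
        counts[level] += 1
    return counts


def shortfalls(planned: dict[str, int], drafted: dict[str, int]) -> dict[str, int]:
    """Levels where a drafted assessment has fewer items than the plan calls for."""
    return {
        level: n - drafted.get(level, 0)
        for level, n in planned.items()
        if drafted.get(level, 0) < n
    }


# Hypothetical blueprint for a 12-item assessment on one standard.
plan = allocate_items({"DOK 1": 30, "DOK 2": 45, "DOK 3": 25}, total_items=12)
print(plan)  # {'DOK 1': 4, 'DOK 2': 5, 'DOK 3': 3}

# A hypothetical draft that leans on DOK 1 items and shortchanges DOK 3.
print(shortfalls(plan, {"DOK 1": 6, "DOK 2": 5, "DOK 3": 1}))  # {'DOK 3': 2}
```

A fuller version would cross DOK levels with content components, as the blueprint step describes, but the same allocate-then-compare check applies.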

Learn more about this edWeb broadcast, “Behind the Scenes of Formative Assessments: Ask the Right Questions to Get the Right System in Place,” sponsored by Pearson.


Join the Community

Assessment for Learning is a free professional learning community where educators can learn from top experts about effective assessment practices.

Pearson’s innovative classroom assessment system, Navvy, helps K-12 educators correctly navigate and successfully advance student progress on required learning objectives. Navvy was designed by Laine Bradshaw, PhD, in collaboration with school district leaders and educators who share a vision for supporting the teacher’s ongoing formative-assessment process with trustworthy, timely, standards-level competency diagnoses that are delivered and used in healthy ways for students.

Blog post by Michele Israel, based on this edLeader Panel
